AI is widely marketed as a shortcut to faster product delivery. Copilots, generative coding tools, and workflow automation platforms promise dramatic improvements in engineering productivity. Developers can generate boilerplate code instantly, summarize repositories, draft documentation, and automate repetitive tasks that previously consumed hours.

This promise has shaped how many organizations approach AI adoption. Leaders often assume that introducing AI into development workflows will eliminate the bottlenecks slowing product delivery. The expectation is straightforward: if engineers can write code faster, products should reach the market faster.

In controlled environments, this assumption holds some truth. Studies show that AI assistants can significantly improve individual task efficiency. For example, a GitHub experiment found that developers using GitHub Copilot completed a coding task 55 percent faster on average than developers who did not use the tool.

However, improvements in coding speed do not automatically translate into improvements in product delivery. Many teams that adopt AI still face familiar challenges:

- Delivery timelines remain unpredictable

- Product roadmaps slip despite faster development cycles

- Engineering teams spend time revisiting previously shipped features

- Integration and coordination delays continue to slow releases

The reason lies in a fundamental mismatch between what AI optimizes and what product execution actually requires.

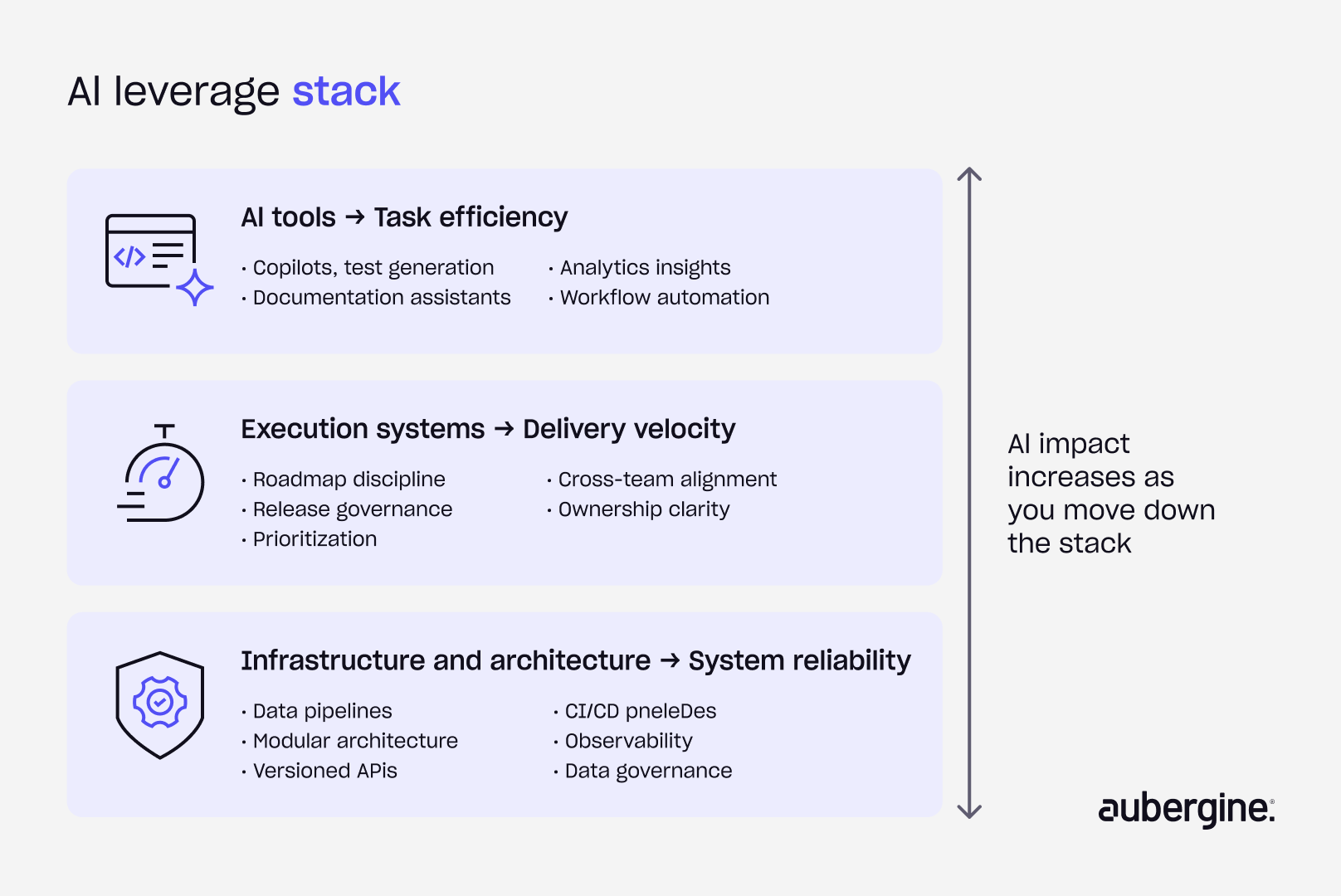

AI primarily improves task efficiency. It helps individuals produce outputs faster. Product execution, however, is a systems problem that depends on coordination across teams, architecture stability, data reliability, and decision-making discipline.

When these systems are stable, AI amplifies productivity. When they are unstable, AI often exposes weaknesses that already exist.

In many organizations, the introduction of AI does not fix broken product execution. It simply makes the cracks more visible.
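A rough back-of-the-envelope calculation makes the gap between task speed and delivery speed concrete. The sketch below is illustrative only: the function name and the coding shares (20, 30, and 50 percent of total delivery time) are hypothetical assumptions, not measurements. It also reads "55 percent faster" as a 55 percent reduction in time spent on the coding task, and applies that reduction only to the coding share of the work.

```python
def delivery_time_after_speedup(coding_share: float, coding_time_reduction: float) -> float:
    """Return the new total delivery time as a fraction of the original.

    coding_share: assumed fraction of delivery time spent writing code.
    coding_time_reduction: fraction by which coding time shrinks (0.55 = 55% less time).
    """
    if not 0.0 <= coding_share <= 1.0:
        raise ValueError("coding_share must be between 0 and 1")
    if not 0.0 <= coding_time_reduction <= 1.0:
        raise ValueError("coding_time_reduction must be between 0 and 1")
    # Coordination, review, integration, and waiting are assumed to be unchanged.
    other_work = 1.0 - coding_share
    faster_coding = coding_share * (1.0 - coding_time_reduction)
    return other_work + faster_coding


if __name__ == "__main__":
    # Hypothetical coding shares, chosen only to show the shape of the effect.
    for share in (0.2, 0.3, 0.5):
        remaining = delivery_time_after_speedup(share, 0.55)
        print(f"coding share {share:.0%}: delivery time falls by {1 - remaining:.1%}")
```

Under these assumed shares, a 55 percent reduction in coding time trims total delivery time by roughly 11 to 28 percent. The rest of the timeline sits in work that the coding assistant never touches.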

Why AI fails in real product environments

AI tools are designed to accelerate work. Their effectiveness, however, depends heavily on the environment in which they operate. In real product organizations, several structural issues often limit the impact of AI adoption.

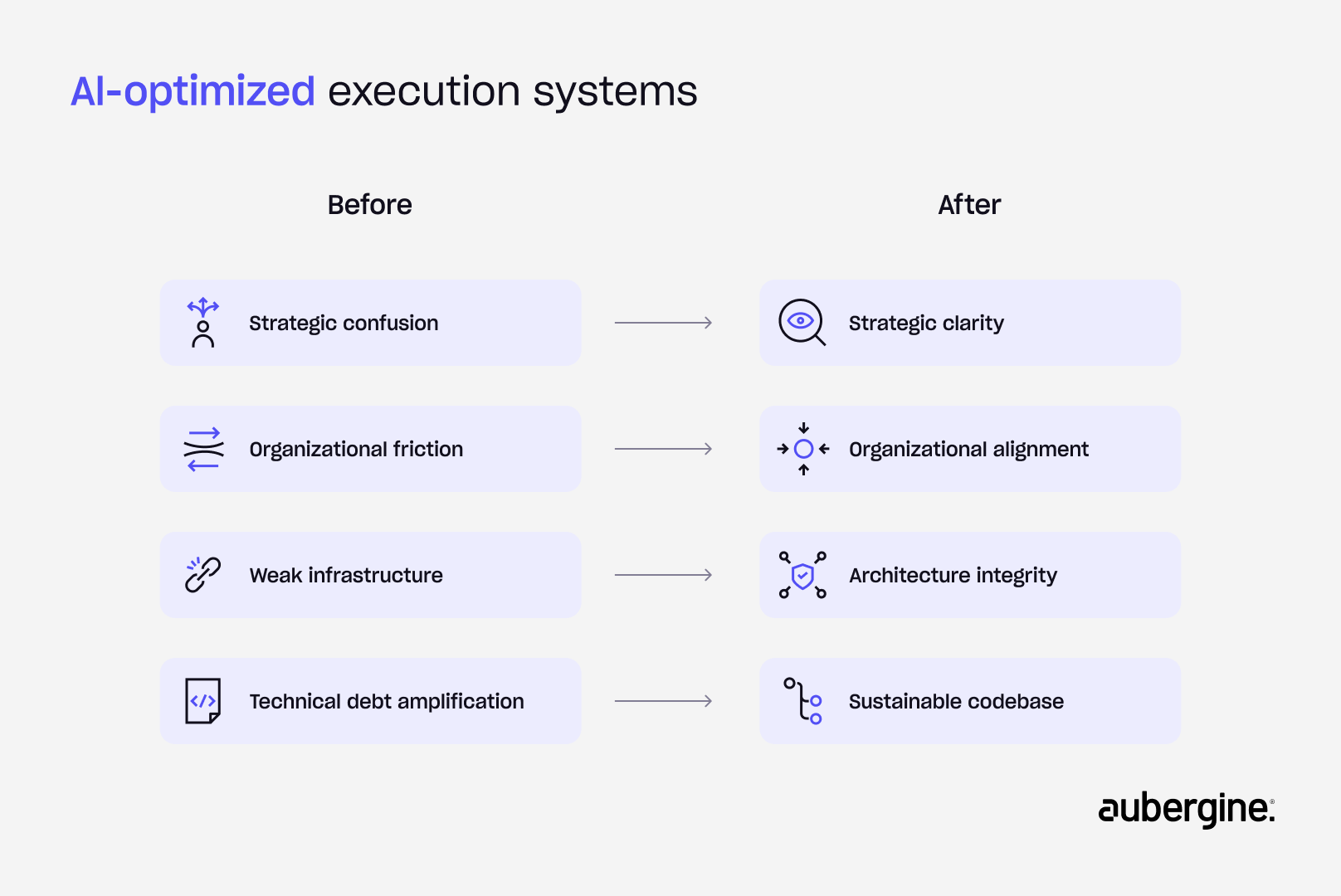

Strategic confusion

AI systems operate within the context they are given. When product direction is unclear, AI simply generates outputs that reflect that uncertainty.

Many organizations struggle with fundamental questions about their product strategy:

- The ideal customer profile changes frequently as the company explores different markets.

- Product roadmaps shift every quarter in response to new priorities.

- Teams build features without a clear long-term product narrative.

- Leadership discussions focus on short-term opportunities rather than strategic positioning.

In these situations, engineering effort becomes reactive. Developers may produce code faster with AI assistance, yet they are still building against unstable product priorities.

AI can generate code, documentation, or feature scaffolding. It cannot determine which problems a product should solve or which roadmap decisions create long-term value.

Organizational friction

Execution problems often appear technical on the surface but originate in organizational structure. AI tools operate within the workflows that teams establish. When coordination across teams is inconsistent, the benefits of automation quickly diminish.

Common friction points include:

- Misalignment between product, engineering, and design teams

- Decisions that stall across functions while ownership is clarified

- Unclear accountability for end-to-end feature delivery

- Dependencies between teams that delay releases

Developers may still produce code quickly with AI support. However, integration delays, review cycles, and cross-team coordination often slow the overall release process.

Research consistently shows that developers spend a large portion of their time navigating these issues. The Atlassian Developer Experience Report 2024 found that developers lose significant time to interruptions, unclear documentation, and coordination overhead rather than actual coding work.

AI does not eliminate these structural challenges. It simply accelerates activity inside the same organizational constraints.

Weak infrastructure

AI tools rely heavily on the quality of the technical environment around them. Many product platforms evolve over several years, accumulating a mix of legacy systems, temporary integrations, and evolving architecture patterns.

In such environments, several infrastructure issues frequently appear:

- Data pipelines produce inconsistent or incomplete datasets.

- Internal systems lack standardized interfaces.

- APIs change without versioning or documentation.

- Services are tightly coupled, making integration fragile.

Automation becomes difficult when the underlying systems are unstable. AI-generated outputs must still interact with these systems. When infrastructure is fragmented, integration work increases rather than decreases.

Technical debt amplification

Technical debt compounds over time in most long-running software systems. AI tools change how this debt evolves.

When architecture is clean and modular, AI can accelerate development without introducing significant complexity. When the codebase is already difficult to navigate, AI-generated code often inherits the same structural problems.

This amplification appears in several ways:

- AI generates new code that follows existing patterns, even if those patterns are inefficient.

- Documentation gaps remain unresolved because historical design decisions were never recorded.

- Quick fixes accumulate faster because AI reduces the friction of adding new code.

Developers may deliver features more quickly in the short term. Over time, however, the complexity of the system continues to increase.

When systems are unstable, AI increases velocity without providing clear direction.

The real bottleneck: execution systems, not intelligence

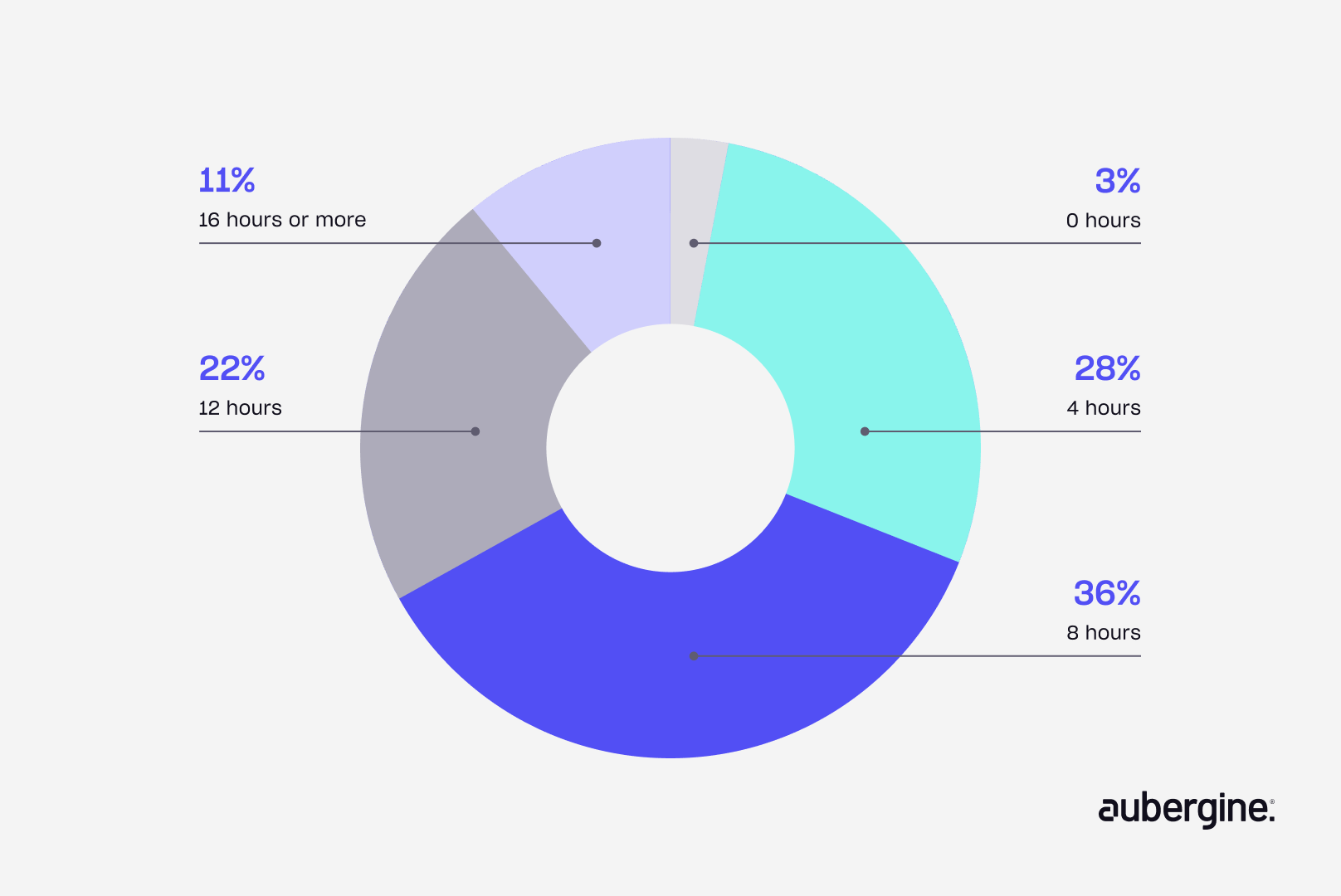

Many conversations about AI assume that the main constraint in engineering teams is developer productivity: if developers write code faster, product delivery will naturally accelerate. More often, the real bottleneck is friction in development workflows. As many as 36% of developers lose up to 8 hours a week to inefficiencies.

Source: https://www.atlassian.com/blog/developer/developer-experience-report-2024

Most engineering organizations are not limited by a lack of intelligence or coding capacity. Developers are already capable of solving complex problems. The real constraint lies in how effectively the surrounding systems support their work.

AI tools are excellent at assisting with specific activities such as:

- Drafting code snippets or boilerplate logic

- Summarizing large repositories or documentation sets

- Generating test cases or implementation suggestions

- Automating repetitive tasks inside development workflows

These capabilities improve individual productivity. Product execution, however, depends on several broader systems.

Strategic clarity that stabilizes product direction

Teams define a consistent ideal customer profile, maintain a roadmap with continuity, and anchor feature development to a clear product narrative. Leadership prioritizes long-term positioning over short-term opportunities. This reduces shifting priorities and ensures that faster AI-assisted output aligns with a stable direction instead of reinforcing uncertainty.

Organizational alignment that reduces coordination overhead

Teams establish clear ownership across product, engineering, and design, with defined decision rights and fewer cross-team dependencies. They streamline handoffs and clarify accountability for end-to-end delivery. This reduces delays caused by misalignment and ensures that faster development translates into faster releases.

Infrastructure standardization that supports reliable execution

Teams enforce stable data pipelines, well-defined APIs with versioning, and consistent interface contracts across systems. They reduce tight coupling and document system behavior. They also implement security controls, access policies, and audit mechanisms that ensure systems meet compliance requirements. This creates an environment where AI-generated outputs integrate cleanly instead of increasing rework due to inconsistencies.
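As one possible illustration of a versioned interface contract, the sketch below checks a payload against a declared contract before it crosses a service boundary. Everything in it is hypothetical: the `InterfaceContract` class, the `check_payload` helper, the `contract_version` field, and the "orders" service are invented for this example and do not describe any particular team's tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InterfaceContract:
    """A hypothetical, minimal description of what one API version promises."""
    name: str
    version: int
    required_fields: frozenset


def check_payload(contract: InterfaceContract, payload: dict) -> list:
    """Return a list of contract violations; an empty list means the payload conforms."""
    problems = []
    declared = payload.get("contract_version")
    if declared != contract.version:
        problems.append(
            f"{contract.name}: expected version {contract.version}, got {declared}"
        )
    # Report every missing required field, sorted for stable output.
    missing = sorted(contract.required_fields - set(payload))
    if missing:
        problems.append(f"{contract.name}: missing fields {missing}")
    return problems


# Hypothetical contract for an illustrative "orders" service.
ORDERS_V2 = InterfaceContract("orders", 2, frozenset({"order_id", "customer_id", "total"}))

if __name__ == "__main__":
    good = {"contract_version": 2, "order_id": "A1", "customer_id": "C9", "total": 42.0}
    stale = {"contract_version": 1, "order_id": "A1"}
    print(check_payload(ORDERS_V2, good))
    print(check_payload(ORDERS_V2, stale))
```

A check like this, run in tests or at integration points, catches unversioned or incomplete changes early, whether the calling code was written by hand or generated by an AI tool.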

Codebase discipline that controls technical debt growth

Teams maintain clear architectural patterns, document key design decisions, and review AI-generated code against existing standards. They use automated testing, staging environments, and structured deployment processes to keep releases predictable. These teams also work to limit quick fixes and refactor actively to keep systems understandable. This prevents AI from reinforcing poor patterns and slows the accumulation of complexity over time.

Research across the software industry supports this perspective. The Google DORA reports consistently show that high-performing engineering teams succeed not because they write more code, but because they maintain strong delivery systems that support rapid and reliable releases.

Intelligence is rarely the bottleneck in product organizations. Coordination, infrastructure, and decision-making are.

Execution systems determine how effectively intelligence can be applied.

Where AI actually creates value

AI delivers the most value when it operates inside structured and disciplined environments. In these settings, the inputs that AI relies on are consistent and reliable. Codebases follow established patterns, documentation standards exist, and development workflows are predictable.

Several practical use cases illustrate how AI contributes in mature environments.

Automated test generation: When repositories follow clear conventions, AI tools can generate meaningful test cases that improve coverage and overall quality outcomes. Aubergine’s own implementation reduced testing cycle time by 40%.

AI-assisted documentation: Structured codebases allow AI systems to generate summaries of modules, APIs, and workflows, significantly reducing the effort required to maintain internal documentation. In practice, documentation tasks can be completed in roughly half the time.

Insight extraction from analytics: AI can analyze structured analytics datasets to surface patterns in user behavior, system performance, or operational anomalies. This enables teams to act earlier, with studies showing a ~30% reduction in defect escape rates.

Workflow acceleration: Inside well-designed engineering pipelines, AI can accelerate tasks such as dependency management, configuration updates, and incident analysis. This translates into 20–45% productivity gains across engineering workflows.

These applications share a common characteristic: AI operates within a stable execution framework rather than replacing it. It improves efficiency inside mature environments but does not create execution maturity on its own. The strategic question therefore shifts from whether organizations should use AI to whether their foundations are ready to support it effectively.

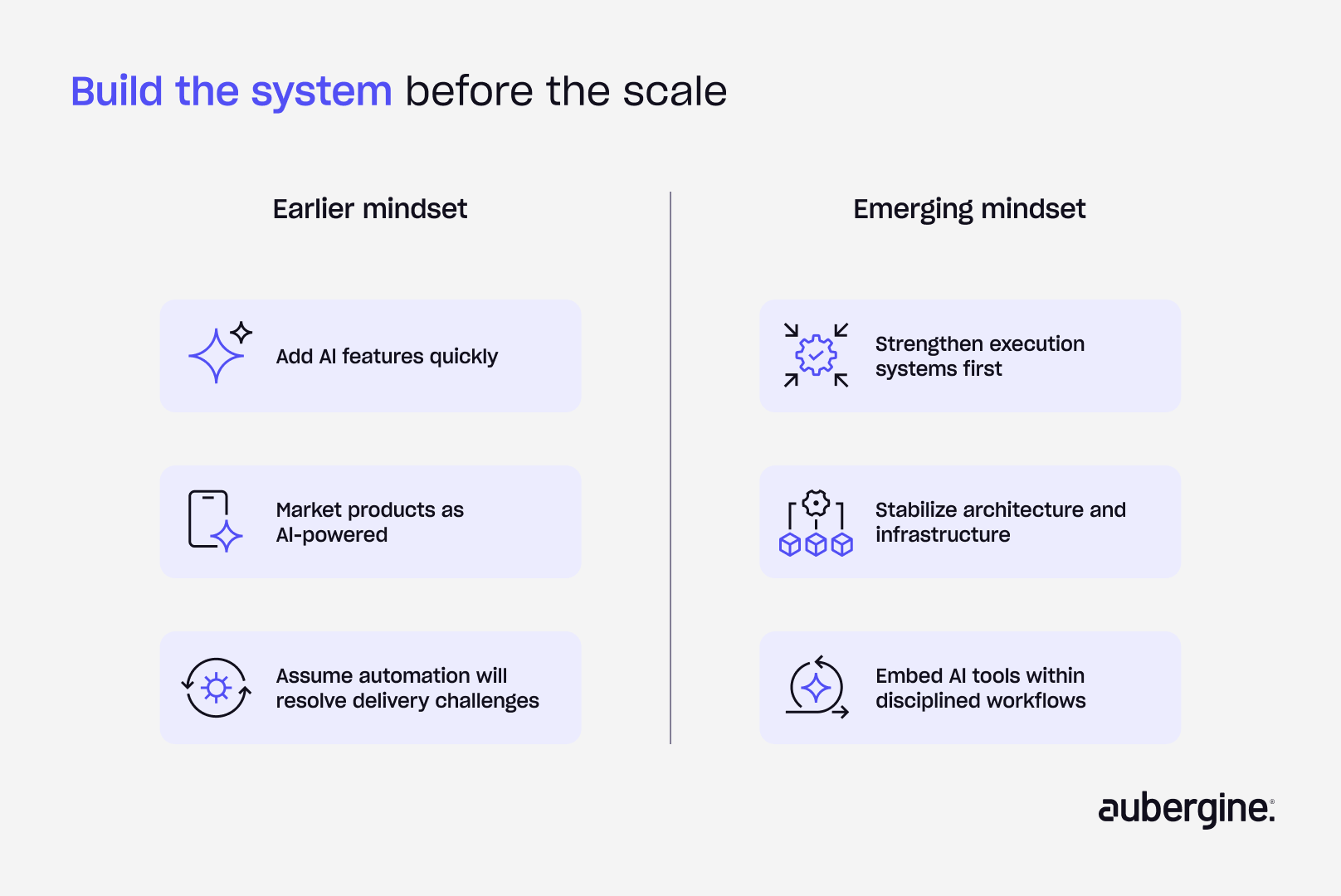

From AI features to execution maturity

The early wave of AI adoption focused heavily on features. Many companies rushed to add AI-powered capabilities to their products and development pipelines. Marketing narratives often emphasized automation and speed.

A more mature perspective is now emerging.

Organizations that successfully integrate AI tend to shift their focus away from individual tools and toward execution systems.

The contrast between the two approaches is becoming clearer.

AI will not fix product execution by itself. However, strong execution systems unlock the real value of AI.

Leaders evaluating their readiness for AI can start with a few practical questions:

- Is the organization’s data infrastructure reliable enough to support automation?

- Are release cycles predictable and well governed?

- Can new engineers contribute meaningfully within a few days of joining?

- Would product delivery remain stable if a key team member left the organization?

The value of AI in engineering isn’t theoretical. It shows up clearly when the underlying systems are structured to support it. Across testing, documentation, analytics, and workflows, the gains are measurable, repeatable, and compounding.

At Aubergine, we focus on embedding AI into the way execution actually happens. One example is how we have systematized the transition from design to development. Our workflows translate Figma designs directly into structured epics and user stories, complete with defined scopes and acceptance criteria. This removes the ambiguity that typically exists between design, product, and engineering, ensuring that teams start with clarity rather than interpretation. Instead of relying on manual handoffs or fragmented documentation, teams work from a consistent, system-driven foundation that reduces back-and-forth and improves execution predictability.

This approach reflects a broader shift from using AI as a layer on top of execution to integrating it into the foundation itself.

The real opportunity, then, isn’t just adopting AI. It’s building the kind of engineering foundation where AI can consistently deliver value you can measure.
